Introduction

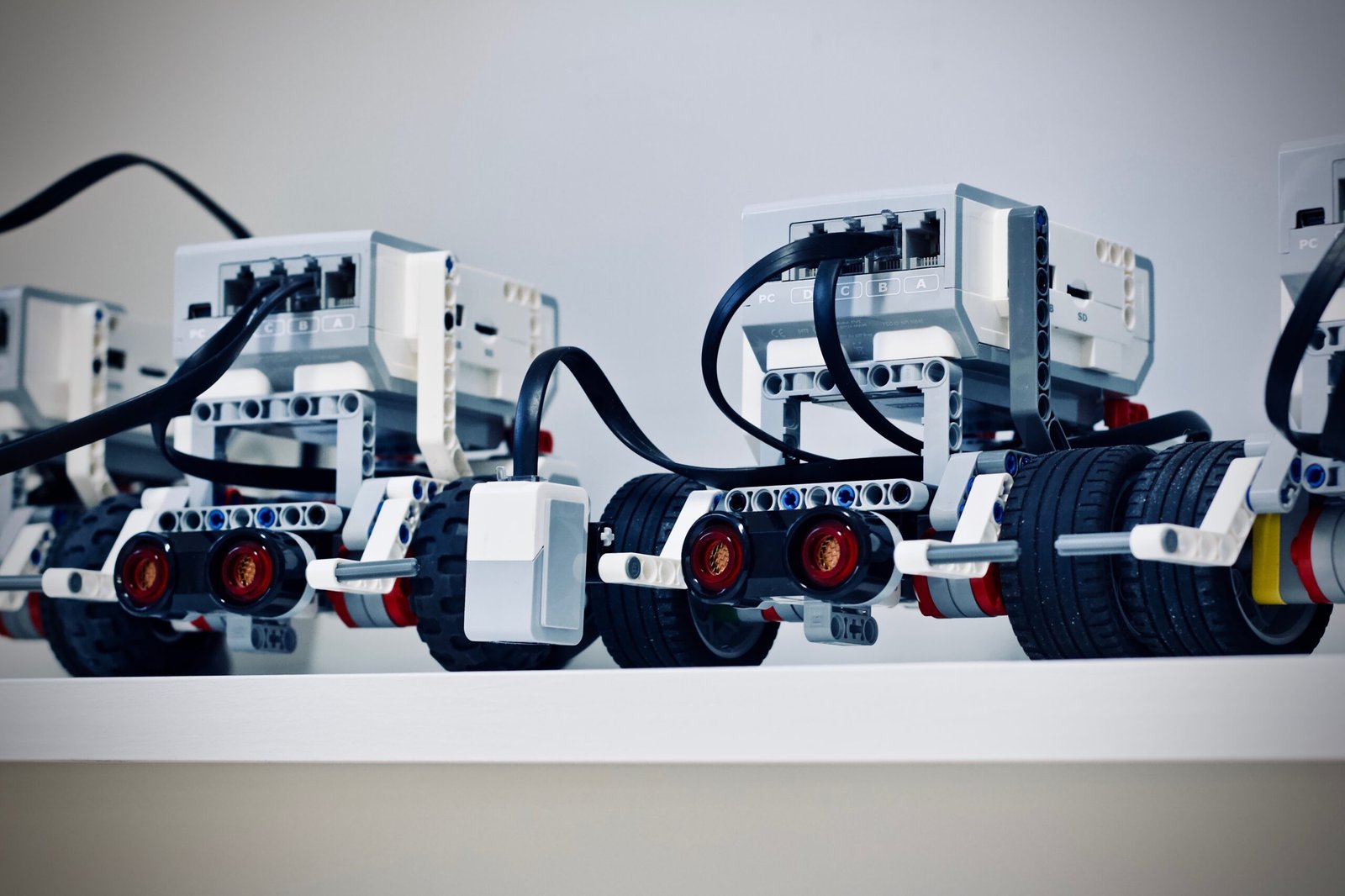

In robotics, one of the most critical challenges is enabling robots to navigate their surroundings autonomously. Traditional methods typically rely on maps built from symbols or coordinates. A newer approach, visual language maps, instead represents the environment through visual elements that both humans and robots can interpret. In this blog post, we will explore the concept of visual language maps for robot navigation and discuss their potential applications and benefits.

What are Visual Language Maps?

Visual language maps are a novel way of representing spatial information for robot navigation. Unlike traditional maps that use symbols or coordinates, visual language maps rely on visual elements and patterns to convey information about the environment. These maps are designed to be easily interpretable by both humans and robots, allowing for seamless communication and collaboration.

Visual language maps are created by analyzing and processing visual data collected by robots. This data can come from various sources, such as cameras, lidar, or depth sensors. By leveraging computer vision techniques, robots can extract meaningful features from the visual data and map them onto a visual language representation.
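To make the mapping step more concrete, here is a minimal sketch of one way it could look. It assumes a computer vision model has already turned each observation into a feature vector with a known position; the names `CELL_SIZE`, `to_cell`, and `build_feature_map` are hypothetical and chosen only for illustration, and averaging features per grid cell is just one simple choice among many.

```python
from collections import defaultdict

CELL_SIZE = 0.5  # metres per grid cell (assumed value for illustration)


def to_cell(x, y, cell_size=CELL_SIZE):
    """Convert world coordinates in metres to integer grid indices."""
    return (int(x // cell_size), int(y // cell_size))


def build_feature_map(observations, cell_size=CELL_SIZE):
    """Average the feature vectors of all observations that fall in each cell.

    observations: iterable of (x, y, feature), where feature is a list of floats
    produced by some upstream vision model (not shown here).
    Returns a dict mapping (col, row) -> averaged feature vector.
    """
    sums = {}
    counts = defaultdict(int)
    for x, y, feature in observations:
        cell = to_cell(x, y, cell_size)
        if cell not in sums:
            sums[cell] = [0.0] * len(feature)
        sums[cell] = [s + f for s, f in zip(sums[cell], feature)]
        counts[cell] += 1
    return {cell: [s / counts[cell] for s in total] for cell, total in sums.items()}


# Three toy observations: two land in the same cell, one in another.
observations = [
    (0.1, 0.2, [1.0, 0.0]),
    (0.3, 0.4, [0.0, 1.0]),
    (1.2, 0.1, [0.2, 0.8]),
]
feature_map = build_feature_map(observations)
```

Each cell of the resulting map holds a summary of what the robot saw there, which later steps can compare against a description of the place the robot should go.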

Benefits of Visual Language Maps

Visual language maps offer several advantages over traditional navigation methods:

- Intuitive Interpretation: Visual language maps use visual elements that are familiar to humans, making them easy to understand and interpret. This allows for better collaboration between humans and robots, as both can communicate and reason about the environment using a shared visual language.

- Robustness: Visual language maps can be more robust to changes in the environment than traditional maps. Because they rely on visual patterns rather than specific coordinates or landmarks, they can adapt to dynamic environments and handle variations in lighting conditions or object placement.

- Efficiency: Visual language maps provide rich information about the environment, which helps robots make informed decisions, choose optimal paths, and navigate more efficiently and accurately.

- Scalability: Visual language maps can scale to different environments and scenarios. Robots can learn and generalize visual patterns, which lets them navigate new and unfamiliar environments with less need for extensive training or mapping.
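To illustrate the path-choosing idea from the Efficiency point, the sketch below finds a shortest route on a small grid using breadth-first search. This is a generic textbook planner, not a method specific to visual language maps; the grid encoding ('#' for obstacles, '.' for free space) and the function name `shortest_path` are assumptions made for this example.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a 4-connected grid.

    grid: list of strings, '#' marks an obstacle and '.' is free space.
    start, goal: (row, col) tuples.
    Returns the list of cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the parents to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] != "#" and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


# A wall in column 2 forces the robot to detour through the bottom row.
grid = [
    "..#.",
    "..#.",
    "....",
]
path = shortest_path(grid, (0, 0), (0, 3))
```

Breadth-first search treats every step as equally costly. A real system would likely weight cells by information from the map, but the structure of the search stays similar.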

Applications of Visual Language Maps

The potential applications of visual language maps for robot navigation are vast and diverse:

- Indoor Navigation: Visual language maps can be used to guide robots in indoor environments such as homes, offices, or warehouses. By understanding the visual cues in the environment, robots can navigate efficiently and perform tasks such as object retrieval or room mapping.

- Outdoor Exploration: Visual language maps can also be applied to outdoor scenarios, enabling robots to navigate in natural environments or urban settings. This opens up possibilities for applications such as autonomous delivery robots or search and rescue missions.

- Collaborative Robotics: Visual language maps facilitate collaboration between humans and robots. By using a shared visual language, humans can provide high-level instructions to robots, such as “go to the red door” or “pick up the blue object,” without the need for complex programming or explicit commands.

- Assistive Robotics: Visual language maps can assist robots in interacting with humans in a more natural and intuitive way. For example, a robot could use visual cues to understand human gestures or expressions, enhancing its ability to provide assistance in healthcare or social settings.
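As a rough illustration of the "go to the red door" example from the Collaborative Robotics point, the sketch below matches an instruction against described landmarks by counting shared words. This keyword matching is a deliberately simple stand-in for whatever model a real system would use to link language and visual features; the `landmarks` dictionary, its positions, and the function name `find_target` are all hypothetical.

```python
def find_target(instruction, landmarks):
    """Pick the landmark whose description shares the most words with the instruction.

    landmarks: dict mapping a short description to a (row, col) map position.
    Returns (description, position), or None if no word matches.
    Note: punctuation is not stripped, so this expects plain lowercase-able text.
    """
    words = set(instruction.lower().split())
    best, best_score = None, 0
    for description, position in landmarks.items():
        score = len(words & set(description.lower().split()))
        if score > best_score:
            best, best_score = (description, position), score
    return best


# Toy landmarks with made-up map positions.
landmarks = {
    "red door": (4, 7),
    "blue object": (1, 2),
    "green door": (9, 3),
}
target = find_target("go to the red door", landmarks)
```

The returned position could then be handed to a path planner as the goal, so the person giving the instruction never has to specify coordinates.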

Conclusion

Visual language maps represent a significant advancement in the field of robot navigation. By leveraging visual data and a shared visual language, robots can navigate their surroundings more efficiently, robustly, and intuitively. Their applications range from indoor navigation and outdoor exploration to collaborative and assistive robotics. As research and development in this area continue to progress, we can expect to see more autonomous systems benefiting from visual language maps.